Relation-Aware Graph Learning with Mixture-of-Experts Prediction for Cognitive Diagnosis

Jingwei Qu¹, Mingze Zhang¹, Pingshun Zhang¹, Li Tao¹, Ying Wang¹, Zhaofang Yang¹, Haibin Ling²

¹Southwest University, ²Westlake University

Abstract

Cognitive diagnosis aims to infer students’ concept-level mastery from their exercise response logs and exercise-concept associations. Two challenges remain: fully leveraging the heterogeneous relations among students, exercises, and concepts, and modeling the substantial variations in student mastery and exercise difficulty, especially when prediction relies on a single predictor. To address these challenges, we propose RMCD, a unified cognitive diagnosis model that integrates relation-aware graph learning with Mixture-of-Experts (MoE) prediction. RMCD constructs a heterogeneous relational graph over students, exercises, and concepts with multiple relation types, and employs a relation-aware graph encoder that learns node and edge representations simultaneously. The encoder further derives relation-strength vectors from student-concept and exercise-concept edges to differentiate relation effects and refine node representations. On top of the learned representations, RMCD introduces an MoE-based prediction head that adaptively combines multiple expert predictors conditioned on the three-entity representations, thereby capturing diverse mastery-difficulty discrepancies and alleviating the limitation of a single predictor. Extensive experiments on the ASSIST17, ASSIST09, and Junyi benchmark datasets demonstrate that RMCD consistently outperforms state-of-the-art cognitive diagnosis methods.
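To make the MoE prediction idea concrete, the following is a minimal sketch, not the RMCD implementation. It assumes linear experts with a sigmoid output, a softmax gate, and simple concatenation of the student, exercise, and concept representations as the gating input. The class name `MoEHead` and all parameters are hypothetical and chosen only for illustration.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def dot(weights, features):
    return sum(w * f for w, f in zip(weights, features))


class MoEHead:
    """Hypothetical MoE head: a softmax gate mixes several linear experts.

    Assumption: the three entity representations are concatenated and fed
    to both the gate and every expert. The actual RMCD head may differ.
    """

    def __init__(self, dim, n_experts, seed=0):
        rng = random.Random(seed)
        in_dim = 3 * dim  # student + exercise + concept
        self.gate = [[rng.gauss(0, 0.1) for _ in range(in_dim)]
                     for _ in range(n_experts)]
        self.experts = [[rng.gauss(0, 0.1) for _ in range(in_dim)]
                        for _ in range(n_experts)]

    def gate_weights(self, student, exercise, concept):
        z = student + exercise + concept  # list concatenation
        return softmax([dot(g, z) for g in self.gate])

    def predict(self, student, exercise, concept):
        """Probability of a correct response as a gated mix of experts."""
        z = student + exercise + concept
        mix = self.gate_weights(student, exercise, concept)
        expert_probs = [sigmoid(dot(w, z)) for w in self.experts]
        return sum(a * p for a, p in zip(mix, expert_probs))
```

Because the gate weights are non-negative and sum to one, the output is a convex combination of the expert probabilities and therefore stays in (0, 1). Conditioning the gate on all three entities lets different student-exercise-concept triples lean on different experts, which is the property the abstract attributes to the MoE head.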

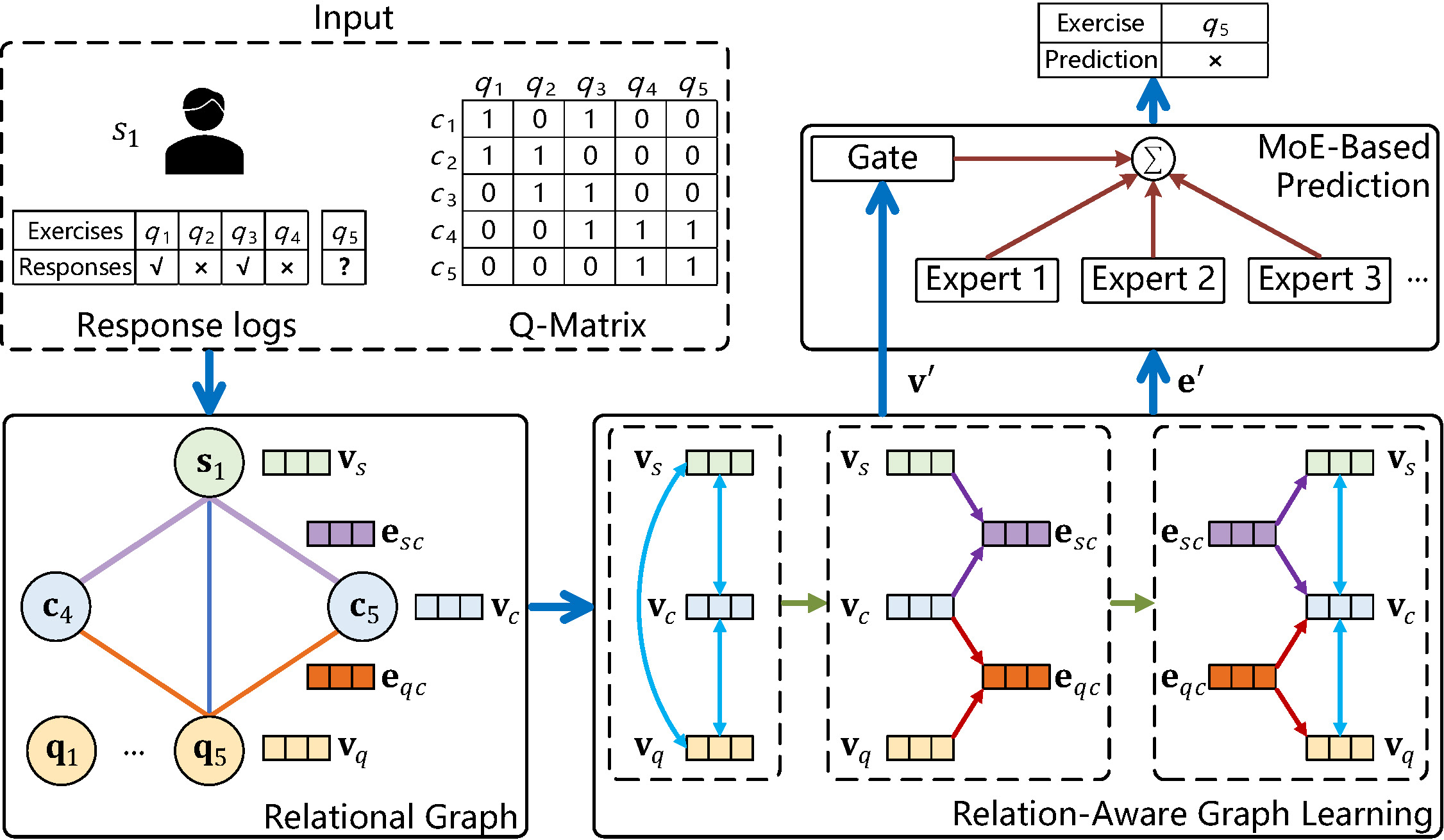

Conceptual illustration of RMCD

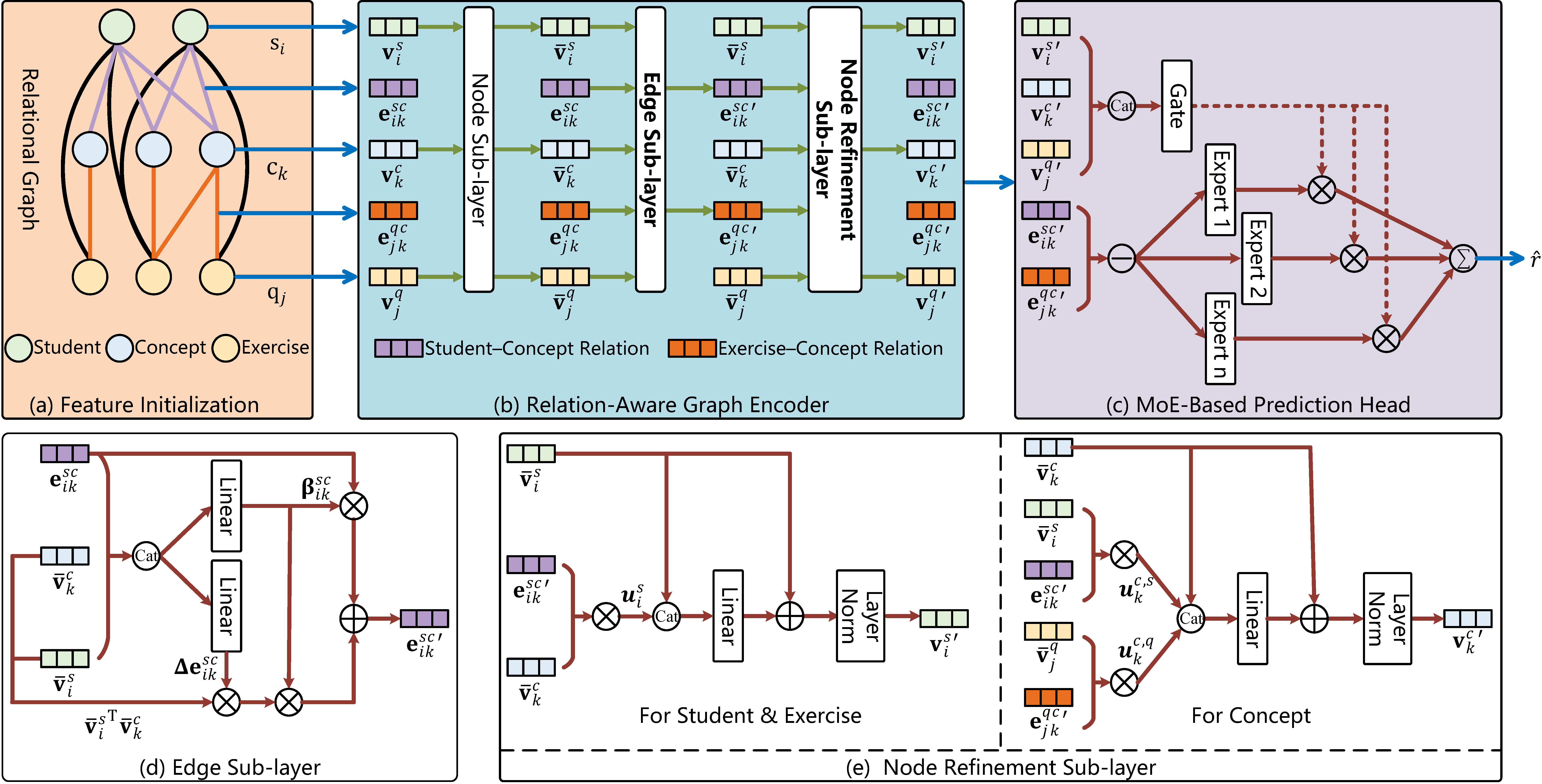

Architecture of RMCD

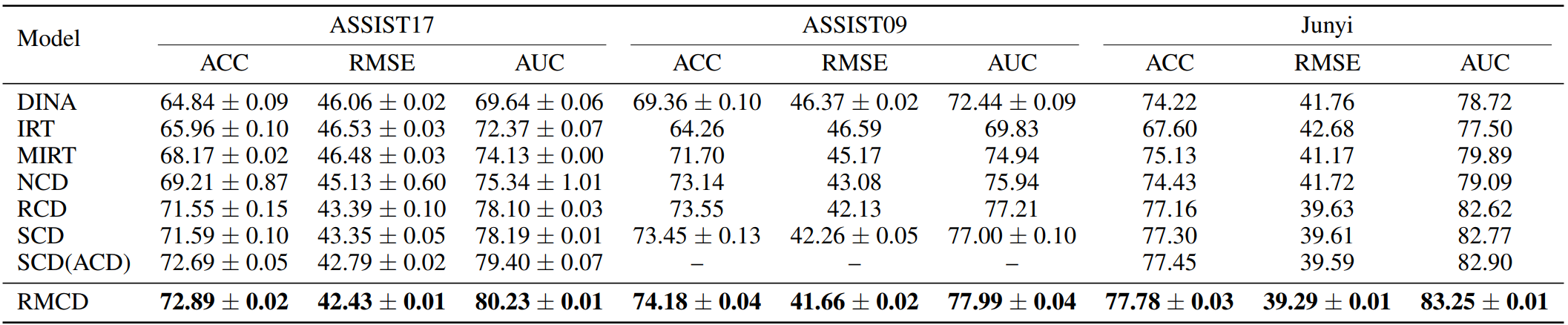

Quantitative Results

Comparison of cognitive diagnosis performance on the ASSIST17, ASSIST09, and Junyi datasets. All metrics are reported in %; lower RMSE and higher ACC/AUC are better. Numbers in bold indicate the best performance.
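For readers unfamiliar with the three metrics, the sketch below shows one common way to compute them from binary response labels and predicted probabilities. It is an illustration only: the 0.5 decision threshold for ACC and the pairwise definition of AUC are assumptions, and the evaluation code used for the reported numbers may differ.

```python
import math


def rmse(y_true, y_prob):
    """Root mean squared error between labels and predicted probabilities."""
    squared = [(t - p) ** 2 for t, p in zip(y_true, y_prob)]
    return math.sqrt(sum(squared) / len(squared))


def accuracy(y_true, y_prob, threshold=0.5):
    """Fraction of responses classified correctly (threshold is an assumption)."""
    hits = sum((p >= threshold) == bool(t) for t, p in zip(y_true, y_prob))
    return hits / len(y_true)


def auc(y_true, y_prob):
    """Probability that a random positive is scored above a random negative.

    Ties count as half a win. This is O(n_pos * n_neg), fine for a demo.
    """
    pos = [p for t, p in zip(y_true, y_prob) if t == 1]
    neg = [p for t, p in zip(y_true, y_prob) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Toy data, invented for illustration only.
labels = [1, 0, 1, 0]
probs = [0.9, 0.2, 0.6, 0.7]
print(100 * rmse(labels, probs), 100 * accuracy(labels, probs), 100 * auc(labels, probs))
```

Multiplying each value by 100 matches the percentage convention used in the comparison.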

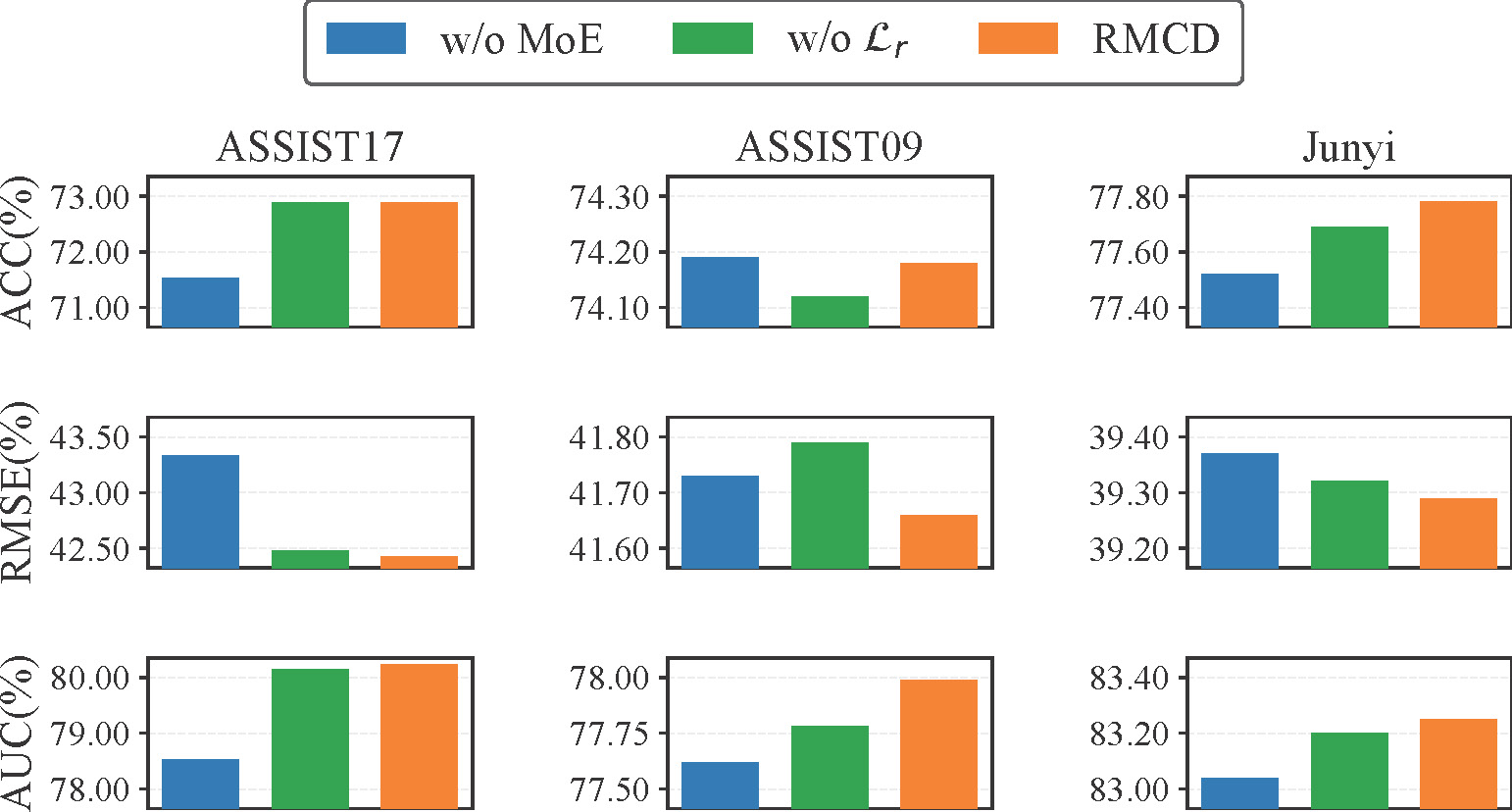

Ablation Study

Ablation study of the MoE head and the regularizer \(\mathcal{L}_r\).

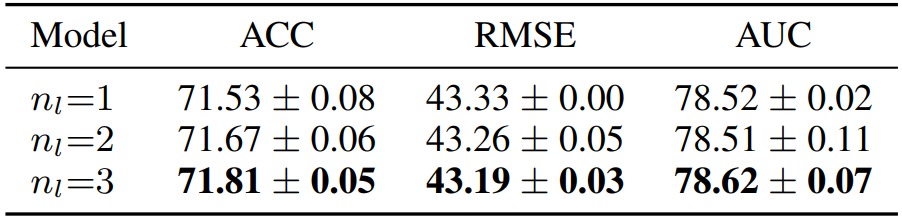

Ablation study of the relation-aware graph encoder depth on ASSIST17 (w/o MoE).

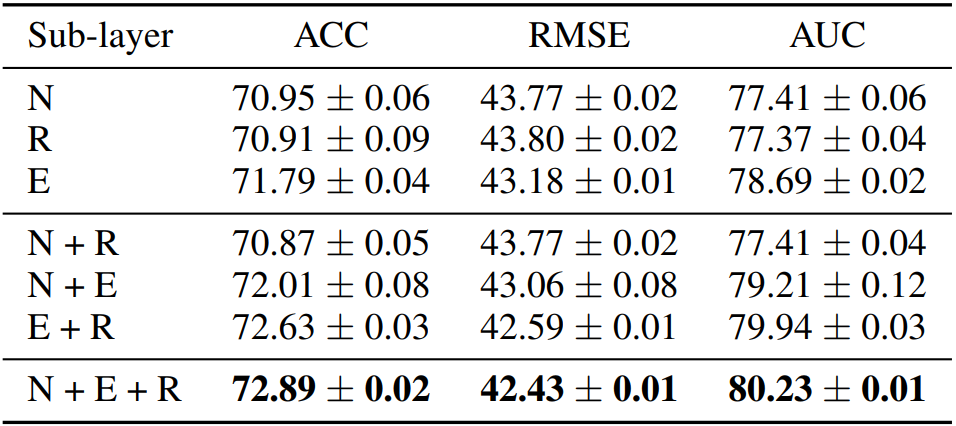

Ablation study of the sub-layer roles on ASSIST17.

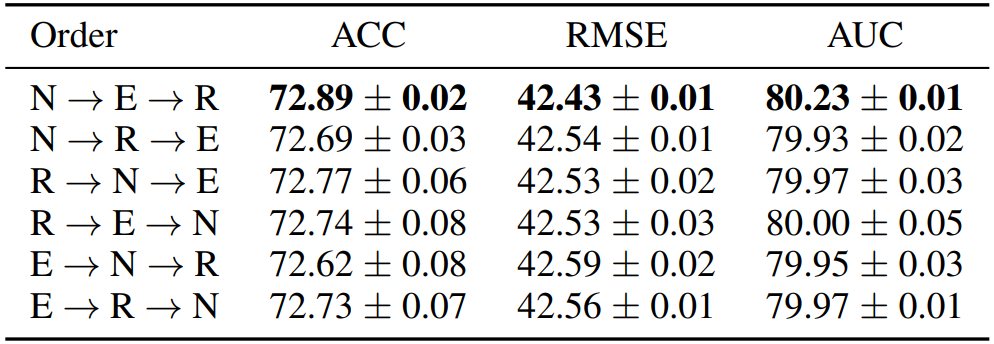

Ablation study of the sub-layer order on ASSIST17.

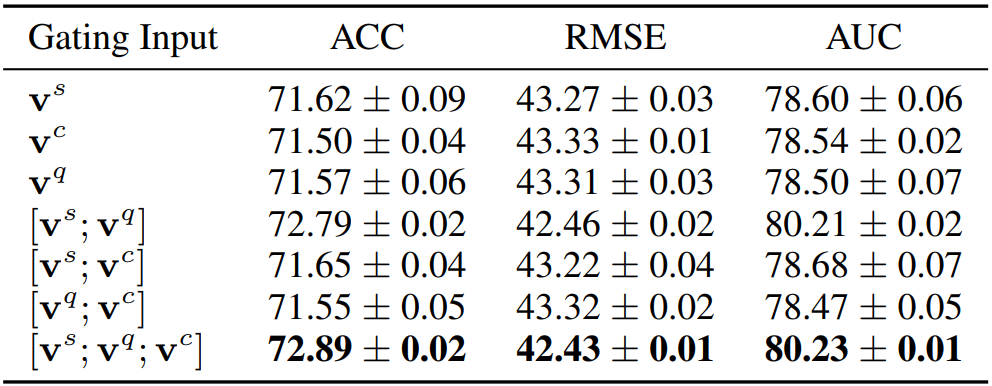

Ablation study of the gating input on ASSIST17.

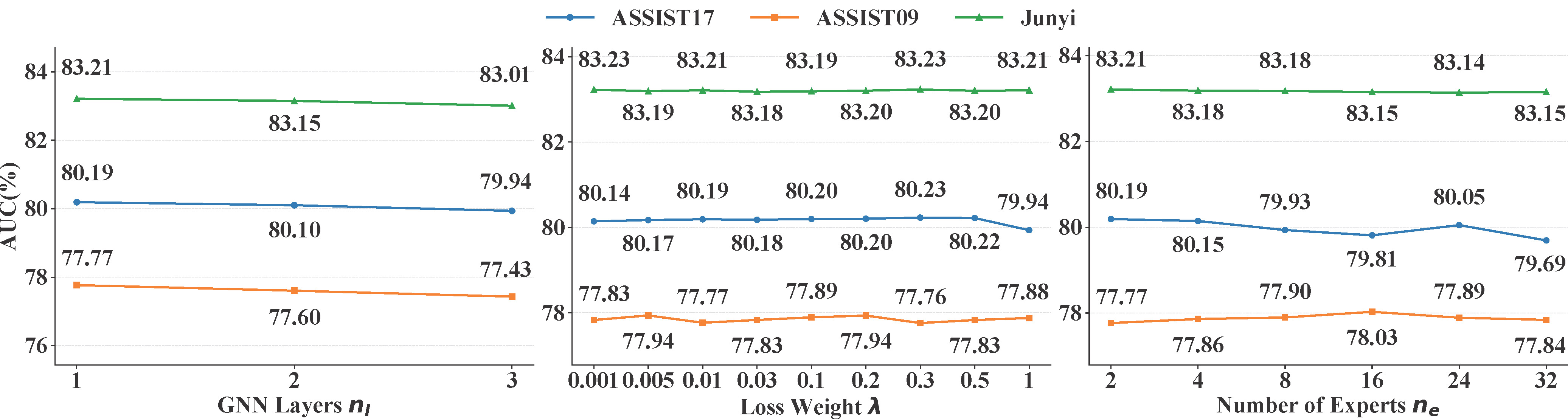

Ablation study of the key hyperparameters \(n_l\), \(\lambda\), and \(n_e\).

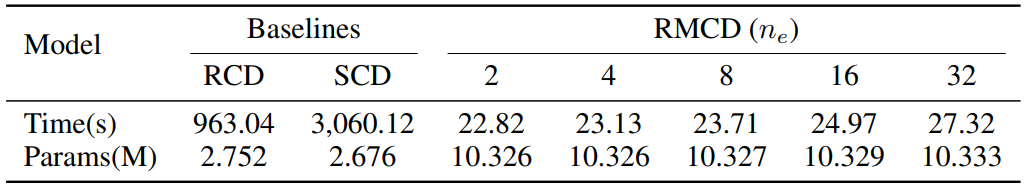

Efficiency comparison between baselines and RMCD with different numbers of experts on ASSIST09.

Reference

@inproceedings{qu2026relation,
